If you don’t know what ChatGPT is, great! Suffice it to say it’s a kind of chatbot driven by artificial intelligence. You give it some text (for instance, a chat message) and it gives you back some text that’s meant to be a reasonable response to, or elaboration of, what you said to it.

There is a lot to say on this topic of whether computer software can think or write. To begin with, I voted “no” on the survey and was happy to see that so far 81% of respondents did the same. Here is the rationale I posted:

- ChatGPT does not have a conception of what is going on in the world. It is a word-emitter that tricks human minds into thinking it does. In other words, it’s a kind of complex automaton, a marionette. The fact that its behavior is complex enough to fool us into thinking it “knows” something does not mean that it does.

- ChatGPT is as likely to emit false information as true information (perhaps more so; has this been assessed?).

- ChatGPT does not have deductive or inductive logical reasoning capabilities; nor does it have any “drive” to follow these principles.

- Human papers are for human writers to communicate to human readers. It seems to me that the only argument in favor of including ChatGPT in this process is a misguided drive to speed up the process even more than publish-or-perish has. In fact, it should be slowed down and made more careful.

- The present interest in ChatGPT is almost entirely driven by investor-fueled hype. It’s where investors are running after the collapse of cryptocurrency/web3. There is a nice interview with Timnit Gebru on the Tech Won’t Save Us podcast, titled “Don’t Fall for the AI Hype,” that goes into this if you’re curious. As computer scientists, we should not be chasing trends like this.

I followed the list above with a second comment when one of the respondents commented that this is [[just how things are now::https://www.nature.com/articles/d41586-023-00107-z]]:

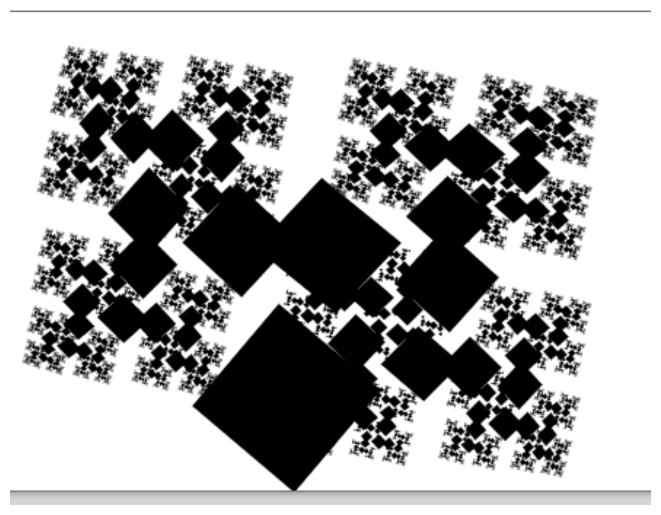

The state of the world is what we make it. As computer scientists, we have a responsibility to hold the line against this maniacal hype. It’s insane, in an almost literal sense, for us to follow the herd on this particular issue. We know better. We know all about just-so stories. We know about mechanical Turks. We’ve been through half a dozen or more AI hype cycles where the latest thing, whether it be expert systems or case-based reasoning or cybernetics or the subsumption architecture or neural networks or Bayesian inference or, or, or... was going to replace the human mind. Not one of them has done that, and ChatGPT won’t either.

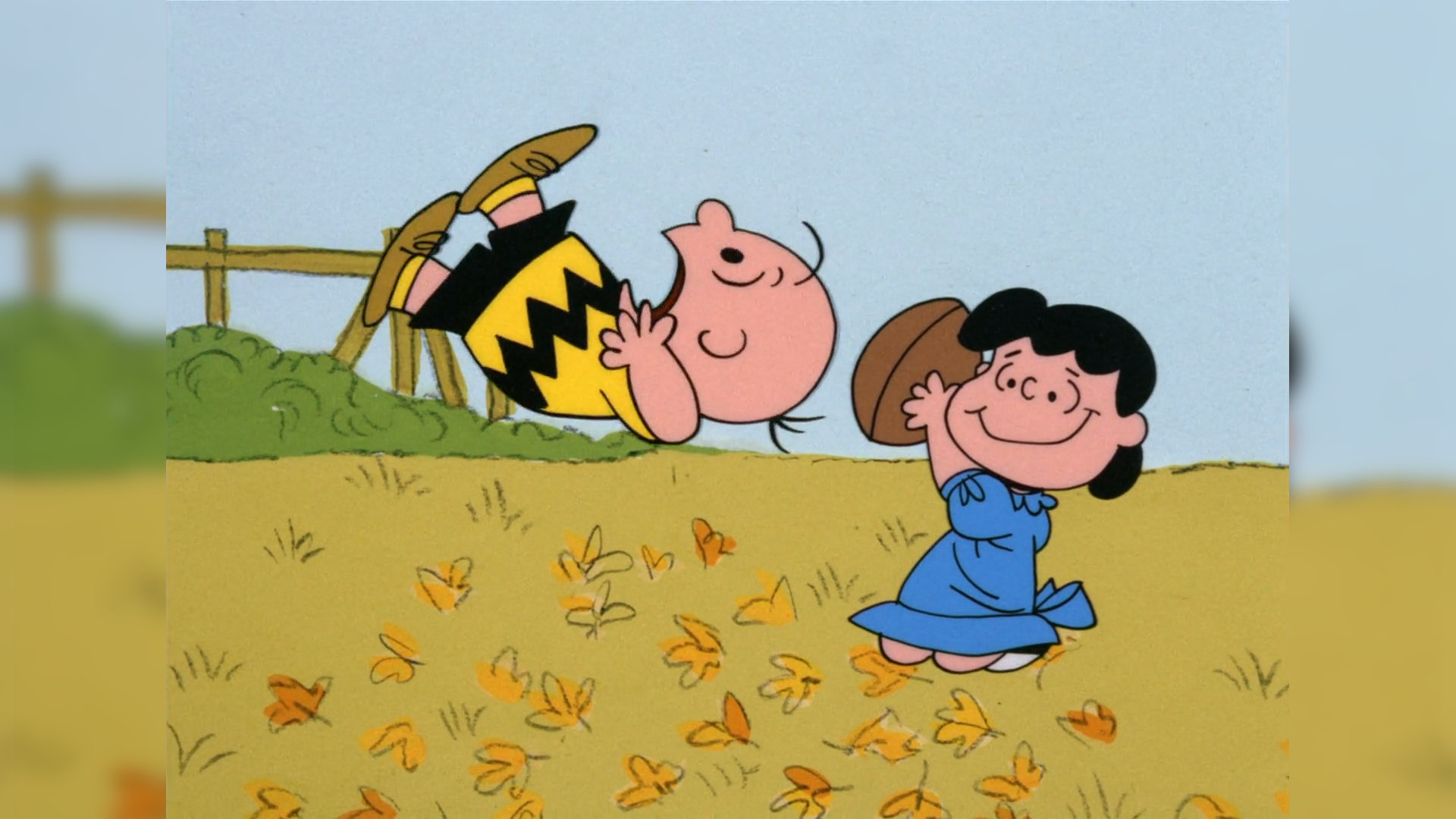

Briefly, my take is this: as computer scientists in or near the field of “artificial intelligence”, we have been through this before, where some new discovery was poised to change how we think about everything. We know better, or at least we should know better. Are we really going to voluntarily do this again:

[[Who is Lucy and who is Charlie Brown in this analogy? And what is the football?::rmn]]

when we can, and I think should, opt out?

Putting aside the question of resisting hype, and putting aside that the hype is almost surely being consciously generated by rich and powerful actors who have their own reasons for forcing this conversation to happen, it's worth asking on a purely technical level what something like ChatGPT actually is capable of doing.

[[And if these systems can think and feel why are we abusing them to write marketing text or copyedit without pay?::lmn]] Without diving into the technical weeds, which I think obscure the forest, I’d offer what I think is a better, and older, and perhaps clearer question: is it possible for a text-to-text map derived solely from existing textual data to think, feel, create, or write?

[[These sorts of questions are manifestations of the sorites paradox and probably have no fully satisfying resolution::rmn]] If so, how do we explain the transition from a noisy lookup table (because that’s surely what these models are early in their training) to something that can think? Do we really believe the ability to think somehow emerges from massive data sets? When, exactly, does that transition arise? How much data is needed before the ability to think “pops”? I think we can say that at the very least these questions have barely been asked, let alone answered, and it’s premature to assert the answer before exploring the question. [[These arguments are old and not really resolved::https://plato.stanford.edu/entries/chinese-room/]].

If not (if we don’t believe ChatGPT can do anything like think), then we have to confess that ChatGPT is only a complicated machine. Which is fine for what it is. There are lots of complicated machines that do wonderful things for us and ChatGPT surely has its uses too. But co-authoring computer science papers is not one of those uses because it is simply incapable of playing that role, and we should stop pretending it can by entertaining these questions.
